Opening Keynote

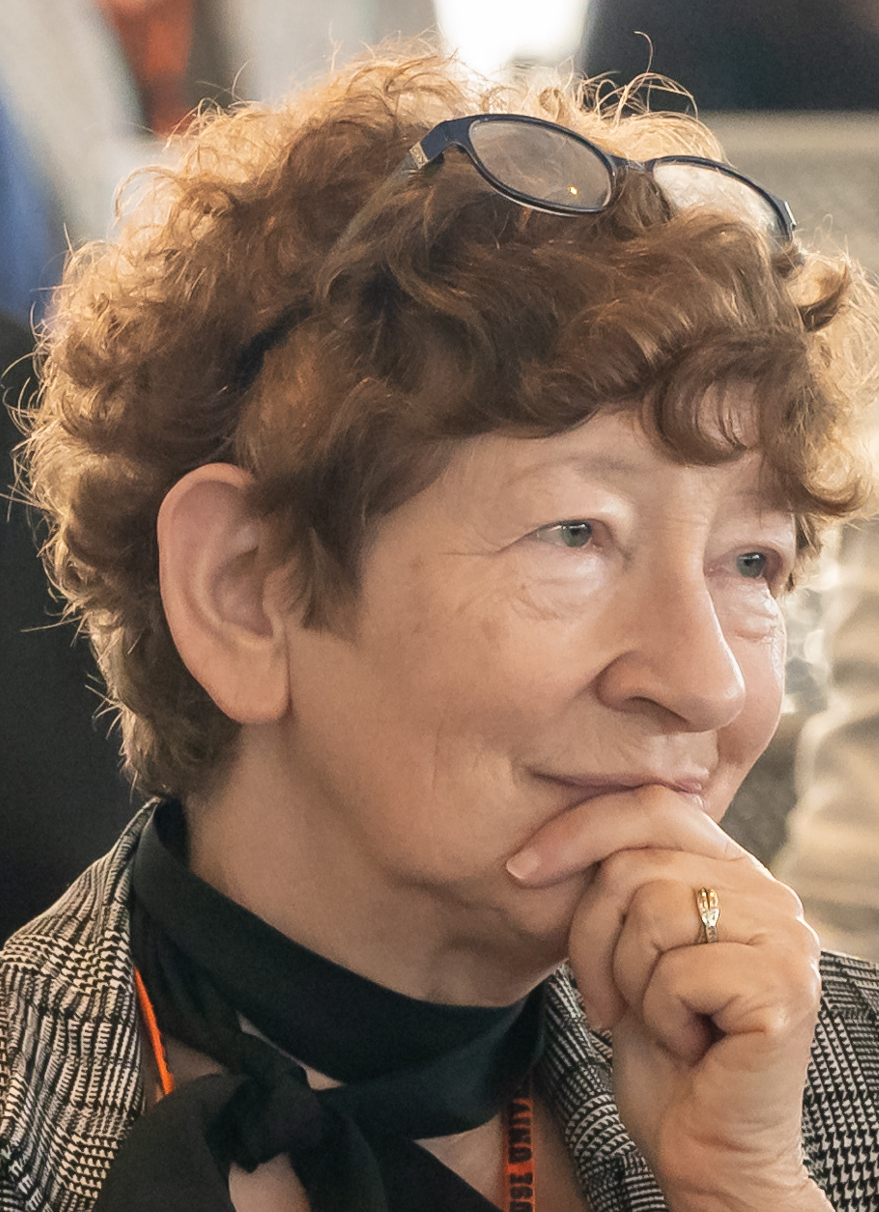

Elisabeth André

Engineering Socially-Interactive AI

In recent years, considerable efforts have been made to improve the expressive behaviors of artificial interactive agents, ranging from animated virtual characters and anthropomorphic robots to digitally enhanced everyday objects. Experience shows, however, that realistic appearance and animation alone do not suffice to maintain long-term engagement beyond an initial novelty effect. Motivated by the goal of enabling smooth and natural interactions, this talk discusses the challenges associated with engineering socially-interactive AI. I focus on three core properties: Social Perception, Socially-Aware Behavior Synthesis, and Learning Socially-Aware Behaviors. Particular emphasis is placed on temporal coordination, including the concurrent generation of appropriate system responses, such as backchannels, while the interaction is ongoing.

Elisabeth André is a full professor of Computer Science and Founding Chair of Human-Centered Artificial Intelligence (AI) at Augsburg University in Germany. She has a long track record in multimodal human-machine interaction and AI-based human behavior analysis with applications in social coaching, psychotherapy and mental health diagnosis. Her work has won many awards, including the Gottfried Wilhelm Leibniz Prize 2021 of the German Research Foundation (DFG), which, at €2.5 million, is the most highly endowed German research award. In 2017, she was elected to the CHI Academy, an honorary group of leaders in the field of Human-Computer Interaction. To honor her achievements in bringing Artificial Intelligence techniques to Human-Computer Interaction, she was awarded a EurAI fellowship (European Coordinating Committee for Artificial Intelligence) in 2013. In 2019, she was named one of the 10 most influential figures in the history of AI in Germany by the National Society for Informatics (GI). Elisabeth André is a member of the Bavarian Academy of Sciences and Humanities, the Academy of Europe, the Asia-Pacific Artificial Intelligence Association, the National Academy of Science and Engineering (acatech), and the German Academy of Sciences Leopoldina. Furthermore, she is a fellow of the European Laboratory for Learning and Intelligent Systems (ELLIS).

Mid Keynote

Luis A. Leiva

The adaptive turn in user interfaces

Adaptive interfaces can adjust their structure, behavior, or content to better match the user, the task, and the interaction context. However, poorly designed adaptations can do more harm than good. Furthermore, adaptive systems rely heavily on personal data, raising ethical concerns around user privacy. This talk revisits the long-standing vision of adaptive user interfaces, examines the technological and human factors driving their resurgence, and discusses the open challenges that must be addressed to make adaptive interaction truly beneficial. Ultimately, as computing environments become more personalized, the question is no longer whether interfaces should adapt, but how we design adaptive systems that remain understandable, trustworthy, and human-centered.

Luis A. Leiva is Associate Professor of Computer Science at the University of Luxembourg, where he leads the Computational Interaction research group. His research interests lie at the intersection of Human-Computer Interaction and Machine Learning. He is a professional member of the Association for Computing Machinery (ACM) and the European Laboratory for Learning and Intelligent Systems (ELLIS). He is a regular program committee member and organizer of major ACM conferences, including CHI, IUI, MobileHCI, SIGIR, CIKM, CUI, and ICMI. He currently serves on the editorial boards of the International Journal of Human-Computer Studies and the Machine Learning with Applications journal. He is also the recipient of several awards and recognitions from both industry and academia, including significant funding from the European Innovation Council.

Closing Keynote

Margaret Burnett

Outside the box in the age of AI

As AI-powered software becomes more and more prevalent, more and more individuals — from every walk of life, at every level of education, across the entire socioeconomic spectrum, and of every gender, race, ethnicity and age — are being affected by it. How does software engineering need to change to make AI-powered applications help all those people and you, as opposed to escalating harms to them and to you?

To address this challenge, we need to get outside the box in how we think about software engineering of AI-powered applications and with them. In this talk, I explore how research from End-User Software Engineering, from Cognitive Sciences, and from Explainable AI (XAI) can bring new ideas to what engineering complex systems in the age of AI needs to accomplish and how.

Margaret Burnett is a University Distinguished Professor at Oregon State University. Her research focuses on people who are engaged in some form of software development. She was the principal architect of the Forms/3 and FAR visual programming languages, and co-founded the area of end-user software engineering, which aims to improve software for computer users who are not trained in programming. Her end-user software engineering research includes seminal work on actionably explaining AI to ordinary end users. She co-leads the team that created the GenderMag method (a software inspection process that uncovers gender inclusiveness issues in software), the SocioeconomicMag method, the InclusiveMag meta-method, and a new analytical approach to intersectionally inclusive software.

Burnett is an ACM Fellow, a member of the ACM CHI Academy, and an award-winning mentor. She has served in over 50 conference organization and program committee roles, and is currently on the Editorial Board of ACM TOSEM and ACM TiiS. She was recently honored with the IEEE CS TCSE Distinguished Women in Science and Engineering (WISE) Leadership Award and the Grace Hopper Conference's ABIE Tech Leader Award. Website: https://web.engr.oregonstate.edu/~burnett/.